EXTENDED BACKUP POWER

Reliable, Clean and Quiet Backup Power for Residential and Industrial On-Grid Users

GRID INDEPENDENT POWER

Complements Solar power to provide 24/7/365 Grid Independent Power for Residential and Industrial Applications

Application Overview

Upgen products are specifically designed to address a range of applications that require dependable, affordable energy.

Upgen products are always deployed with a battery storage device. The battery serves as an electrical “buffer” and performs load-following for the overall system, handling all power peaks and valleys encountered. It also provides start-up and shut-down power for the Upgen. The Upgen unit’s primary role is to produce power at a constant rate (the average daily power rate) and to keep the battery charged; the battery in turn supplies the overall system with the energy it needs to fulfill its function.
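The buffering idea can be illustrated with a rough sketch. It assumes a hypothetical hourly load profile (not measured data), ignores conversion and storage losses, and uses made-up function names; it is not Upstart's sizing method. The generator runs at the average daily power, and the battery absorbs the difference between that constant output and the varying load.

```python
def average_power_kw(hourly_load_kw):
    """Constant generator output (kW) that delivers the same daily energy as the load."""
    return sum(hourly_load_kw) / len(hourly_load_kw)


def battery_swing_kwh(hourly_load_kw):
    """Usable battery capacity (kWh) needed to absorb the load's peaks and valleys."""
    generator_kw = average_power_kw(hourly_load_kw)
    level = lowest = highest = 0.0  # battery energy relative to its starting level
    for load_kw in hourly_load_kw:
        level += generator_kw - load_kw  # one-hour step: kW x 1 h = kWh
        lowest = min(lowest, level)
        highest = max(highest, level)
    return highest - lowest


# Hypothetical 24-hour load profile in kW (an assumption): 24 kWh in total.
example_load_kw = [0.5] * 6 + [1.5] * 2 + [0.5] * 8 + [2.5] * 4 + [1.0] * 4

print(average_power_kw(example_load_kw))   # 1.0 (kW of constant generator output)
print(battery_swing_kwh(example_load_kw))  # 6.0 (kWh of battery swing)
```

In this illustrative profile the load peaks at 2.5 kW, yet the generator only ever needs to deliver 1 kW because the battery covers the peaks.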

Depending on the specific application requirements, Upgen products will also be deployed with other pieces of hardware and software including: solar panels, inverters, switchgear, and system control software.

Upstart applications fall into two main categories: Residential and Industrial. Please see the sections below for more details on each.

RESIDENTIAL

Many Residential customers who install Solar/PV units soon realize that these systems do not function at all when the grid goes down. Without some form of storage or local generation, a typical solar installation can power a home only while the grid is fully operational, and therefore provides no Backup Power.

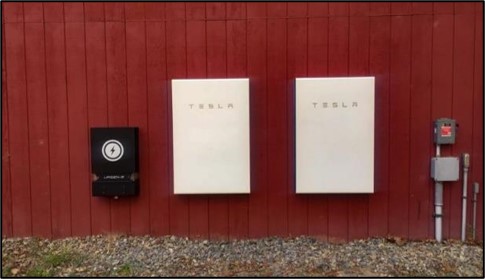

In recent years, some solar customers have been adding energy storage (batteries) to enable their solar-based systems to operate during grid outages. While adding storage can allow a solar installation to work when the grid is down, the duration of system operation during a grid outage is limited by the capacity of the installed battery. Most typically sized storage systems will cover the energy requirements of a home for only part of a day. To get much more than 24 hours of backup coverage, a customer would need to invest in an oversized and very expensive battery system, which is not a good economic choice.
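The arithmetic behind this limit can be sketched as follows. The 13.5 kWh usable battery capacity is a hypothetical figure chosen for illustration, the 30 kWh per day consumption is the average-home figure quoted in the Power vs. Energy section of this page, and the load is assumed to be spread evenly over the day with no solar contribution.

```python
def backup_hours(usable_battery_kwh, daily_energy_kwh):
    """Hours a battery alone can carry a home, assuming an even load over the day."""
    average_load_kw = daily_energy_kwh / 24
    return usable_battery_kwh / average_load_kw


def battery_needed_kwh(target_hours, daily_energy_kwh):
    """Usable battery capacity (kWh) needed to cover the target outage duration."""
    return target_hours * daily_energy_kwh / 24


# Hypothetical 13.5 kWh battery, 30 kWh/day home.
print(backup_hours(13.5, 30))      # 10.8 hours, i.e. part of a day
# Capacity for a three-day outage at the same consumption.
print(battery_needed_kwh(72, 30))  # 90.0 kWh
```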

A far better way to get extended backup for Residential applications is to complement solar panels with an Upgen fuel cell generator and an appropriately sized battery storage unit. This “triple-hybrid” solution provides inexpensive solar power for the majority of the time and also provides unlimited backup capability as needed, courtesy of the Upgen unit (to cover grid outages and also shortfalls in solar panel output). If this system is sized appropriately, the combination of solar, fuel cell, and battery can allow a residential customer to be completely grid-independent (not needing the grid at all on a 24/7/365 basis). And in certain locations, the Levelized Cost of Electricity (LCOE) of this grid-independent triple-hybrid system will be lower than the energy pricing from the customer's grid operator.

CONFIGURATION 1

GRID BACKUP

Residential Backup with Solar

This configuration couples an Upgen fuel cell unit with a Solar/PV system and a small battery. It can be a NEW installation or a RETROFIT to an existing Solar/PV system.

CONFIGURATION 2

GRID BACKUP

Residential Backup without Solar

For homes with small to moderate daily energy needs, Backup Power can be provided by installing just an Upgen unit and a moderate storage battery, with no Solar installed. This Upgen configuration provides unlimited Backup capability for households and compares favorably with conventional internal combustion engine generators (similar total costs, much lower emissions and noise, less maintenance, higher efficiency, less fuel).

INDUSTRIAL

Across the Industrial world, there are many mission-critical applications that simply cannot afford to be interrupted by grid outages. These applications range from telecommunications towers and pipeline monitors to a myriad of IoT devices. Upstart’s Upgen products are very well-suited to provide reliable and effective Backup Power in these instances.

The function of an Upgen Industrial solution for Backup is simple: when the primary power source (typically the grid) is interrupted, an Upgen Backup system steps in and provides immediate and effective Backup Power for as long as it is needed. The Upgen system for Backup Power is reliable and cost-effective, produces very low emissions and little noise, and runs on available propane or natural gas. On these dimensions and more, an Upgen backup system is a viable and attractive alternative to internal combustion engine (ICE) generators.

CONFIGURATION 1

ON-GRID BACKUP

Industrial Backup

CONFIGURATION 2

OFF-GRID POWER

Industrial Grid Independent/Triple Hybrid

The Upgen fuel cell unit can also be utilized in conjunction with Solar/PV and Storage to provide both Primary and Backup Power to Industrial locations. This “triple hybrid” configuration is particularly attractive for a) off-grid locations, b) locations where establishing a grid connection is expensive, and c) locations with high electricity rates (this configuration can be quite competitive on an LCOE basis with traditional grid power rates).

UPGEN ADVANTAGES/FIT

Fit with Upgen: The following lists will help identify the characteristics of Industrial Power applications that align well with current Upgen products. If your company has applications that align well with Upgen products, please contact us to discuss.

Upgen is a GOOD FIT in these situations:

- The daily average energy consumption is under 24 kWh per day (or the circuits to be backed up consume under 24 kWh per day)

- Existing natural gas line or standard propane fuel tank available

- Heating and cooling are modest/consistent or are fueled by natural gas or propane units

- Grid power is not available at the location or is very expensive

Upgen is NOT A GOOD FIT in these situations:

- The daily average consumption of the circuits to be backed up is more than 24 kWh per day (note: Upstart will likely have higher-powered units in the future)

- No access to natural gas or propane

- Heating and air conditioning are substantial and highly seasonal and are electrically driven
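As an informal illustration, the criteria in the two lists above can be expressed as a simple screening check. The `Site` fields and the `fit_issues` function are hypothetical names introduced here, not part of any Upstart tool, and treating exactly 24 kWh per day as outside the fit is an assumption, since the lists only say "under" and "more than".

```python
from dataclasses import dataclass

MAX_DAILY_KWH = 24.0  # daily energy threshold quoted in the lists above


@dataclass
class Site:
    daily_kwh: float                    # average daily energy of the circuits to be powered
    has_gas_or_propane: bool            # natural gas line or standard propane tank available
    large_seasonal_electric_hvac: bool  # substantial, seasonal, electrically driven HVAC


def fit_issues(site):
    """Return the reasons a site falls outside the listed criteria (empty list = good fit)."""
    issues = []
    if site.daily_kwh >= MAX_DAILY_KWH:  # boundary handling is an assumption
        issues.append("daily energy is not under 24 kWh")
    if not site.has_gas_or_propane:
        issues.append("no access to natural gas or propane")
    if site.large_seasonal_electric_hvac:
        issues.append("substantial, seasonal, electrically driven heating or cooling")
    return issues


# Hypothetical sites for illustration.
print(fit_issues(Site(18.0, True, False)))  # []
print(fit_issues(Site(30.0, True, False)))  # ['daily energy is not under 24 kWh']
```

A check like this is only a first screen; the availability or cost of grid power at the location, which the lists also mention, is not modeled here.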

OTHER APPLICATIONS

Upstart Power products can also be deployed in applications other than those listed above. Please contact us if you would like to discuss applications that might align with the current or future capabilities of our Upgen product line.

POWER VS. ENERGY

What is the difference between a Kilowatt and a Kilowatt-Hour?

A kWh is a measure of energy, a kW is a measure of power.

But what are energy and power?

Physics textbooks will explain that Energy is the “capacity to do work”, but in the case of residential electricity, it might be easier to think of Energy as the total of what we need to buy to run our households.

Power is the rate at which energy is generated or used. For our purposes, imagine boiling a kettle. It takes a certain amount of energy to bring the water to a boil (the calorie is in fact defined by the energy needed to heat water, but we’ll leave that for another day). If we want to boil the water faster, then we need to supply more power to the kettle.

In equation terms, we can express the relationship between Energy, Power, and Time as follows:

Energy = Power x Time (or kWh = kW x h).

Another example might help with the explanation. The average solar panel installation in America has a power rating of 6 kW. The average home consumes about 30 kWh a day, so we can work out theoretically how many hours of sunshine we need to provide all the energy the home needs as follows:

Time = Energy / Power

Time = 30 kWh / 6 kW

Time = 5 hours

This calculation tells us that the average American home equipped with solar panels should be able to produce all of its required energy from the sun, provided the panels deliver their full rated power for at least 5 hours a day.
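The same relationship can be written as two small helper functions. The function names are illustrative only; the figures are the 6 kW and 30 kWh values used in the example above.

```python
def energy_kwh(power_kw, hours):
    """Energy = Power x Time."""
    return power_kw * hours


def hours_needed(energy_kwh_required, power_kw):
    """Time = Energy / Power."""
    return energy_kwh_required / power_kw


print(hours_needed(30, 6))  # 5.0 hours at full rated power
print(energy_kwh(6, 5))     # 30 kWh produced in those 5 hours
```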

In practice, the relationship is more complicated than this. The power output of solar panels is sensitive to the angle of the sun’s rays, so the panels do not produce their maximum output throughout the day, even in consistent sunshine. The output is also reduced by anything that comes between the panel and the sun, such as cloud or snow. Finally, the times when the home needs energy do not typically coincide with the peak power output of the solar panels.
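A very rough sketch shows how much the sun's angle and cloud cover can change the simple estimate. It rests on several assumptions that are not from this page: panel output follows a half-sine curve over the daylight hours, the panels reach their rated power only at midday, and a single `clearness` factor between 0 and 1 stands in for cloud, snow, and other obstructions.

```python
import math


def daily_solar_kwh(rated_kw, daylight_hours, clearness=1.0):
    """Daily energy (kWh) under an assumed half-sine output curve.

    The area under rated_kw * sin(pi * t / daylight_hours) from sunrise to
    sunset is rated_kw * daylight_hours * 2 / pi.
    """
    return rated_kw * daylight_hours * (2 / math.pi) * clearness


clear_day = daily_solar_kwh(6, 12)                  # about 45.8 kWh
cloudy_day = daily_solar_kwh(6, 12, clearness=0.5)  # about 22.9 kWh

print(round(clear_day, 1), round(cloudy_day, 1))
```

Under these assumptions a 6 kW installation covers a 30 kWh day when the sky is clear, but falls short once the clearness factor drops to one half, even with 12 hours of daylight.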